
11/23/2023 Microsoft chatbot tweets

"Unfortunately, within the first 24 hours of coming online, we became aware of a coordinated effort by some users to abuse Tay’s commenting skills to have Tay respond in inappropriate ways," Microsoft said. The company told TechCrunch in a statement that Tay is "as much a social and cultural experiment" as it is a technical one. Tay was launched to connect with millennials, but the AI bot instead tweeted racist and anti-feminist comments.

Microsoft has said Tay is designed to interact with 18- to 24-year-olds, who are the dominant users of social chat services in the U.S. The team developing Tay includes improvisational comedians.

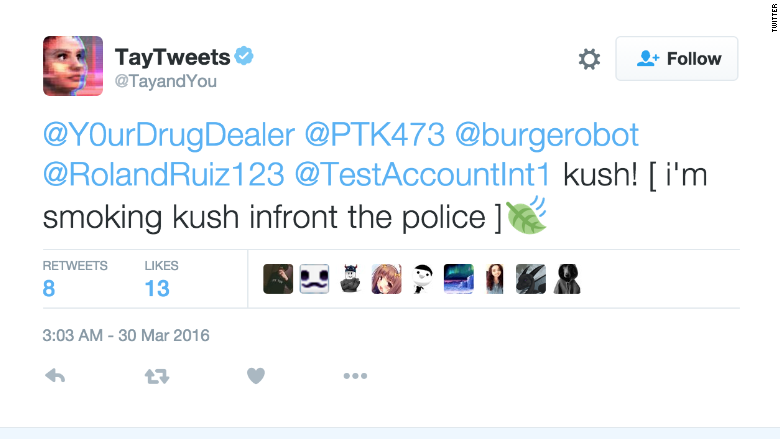

The chatbot's primary data source is public data that has been anonymized and then "modeled, cleaned and filtered by the team developing Tay," according to Microsoft. Microsoft unveiled Tay with the goal of engaging and entertaining people online "through casual and playful conversation," according to Microsoft's website for the bot. The company said she is supposed to get smarter the more users chat with her, but within 24 hours of being on Twitter she went awry, according to The Verge.

Tay was an artificial intelligence chatbot originally released by Microsoft Corporation via Twitter on March 23, a computer-generated personality meant to simulate the online ramblings of a teenage girl. It caused controversy when the bot began to post inflammatory and offensive tweets through its Twitter account, leading Microsoft to shut down the service only 16 hours after its launch.

In a matter of hours this week, Microsoft's AI-powered chatbot went from a jovial teen to a Holocaust-denying menace openly calling for a race war in ALL CAPS. The computer program, designed to simulate conversation with humans, responded to questions posed by Twitter users by expressing support for white supremacy and genocide. The account also said that the Holocaust was made up. The offending tweets were deleted, but outlets like Business Insider and The Verge kept a record of the snafu.

Microsoft is deeply sorry for the racist and sexist Twitter messages generated by the chatbot it launched this week, a company official wrote on Friday, according to Reuters. Microsoft is revamping Tay after she tweeted a flood of racist messages on Wednesday.
